Load Test HTTP Services with SLOs

Overview

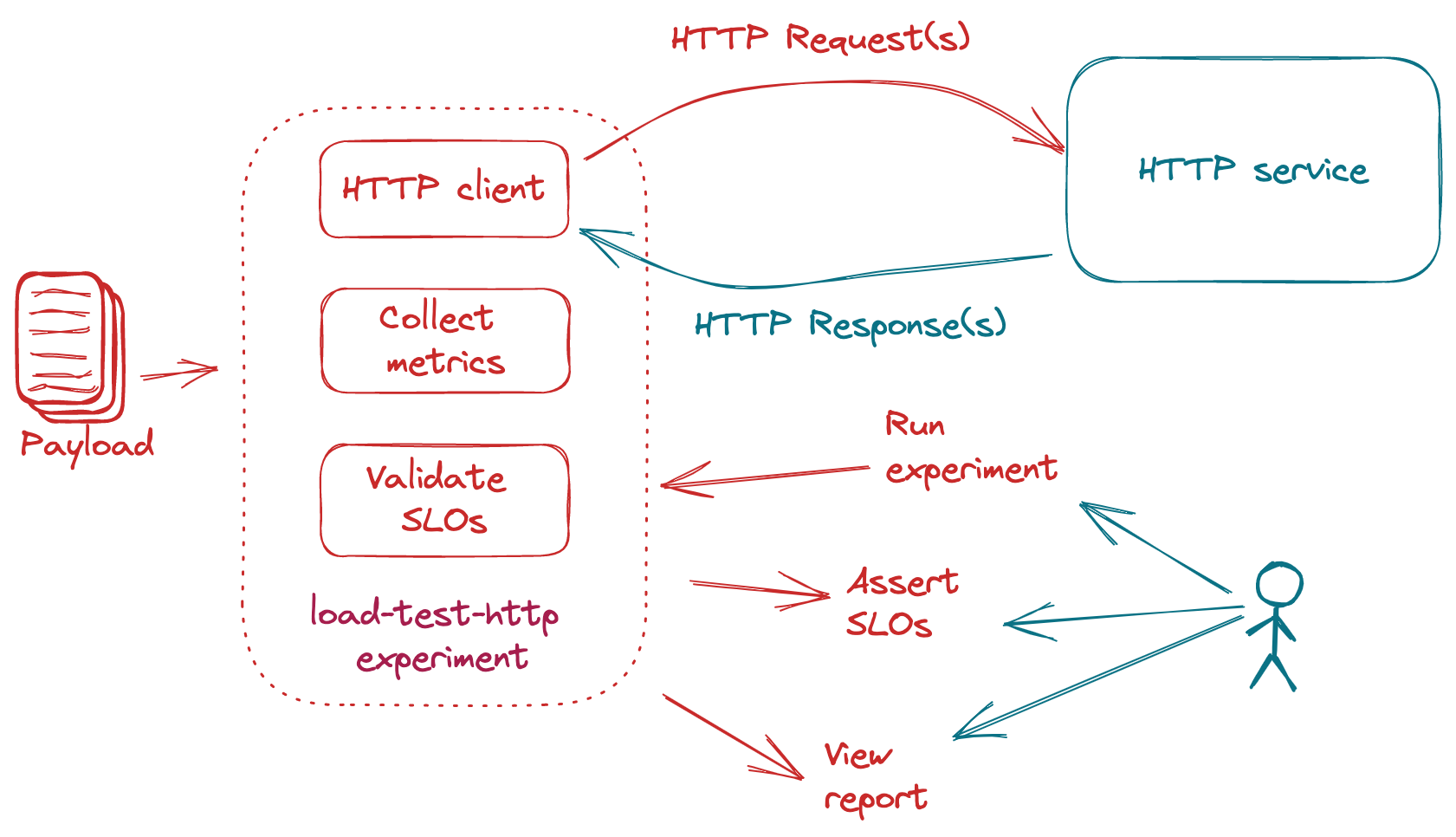

Iter8's load-test-http experiment chart can be used to generate requests for HTTP services, collect built-in latency and error-related metrics, and validate service-level objectives (SLOs).

Use-cases: Rapid testing, validation, safe rollouts, and continuous delivery (CD) of HTTP services are the motivating use-cases for this experiment type. If the HTTP service satisfies the SLOs, it may be safely rolled out, for example, from a test environment to a production environment.

The "Your first experiment" tutorial provides a basic example of using the load-test-http experiment chart. This tutorial provides additional examples.

Before you try these examples

- Install Iter8.

- Run the httpbin sample app from a separate terminal. We will load test this app in the examples.

docker run -p 80:80 kennethreitz/httpbin

You can also use Podman or other alternatives to Docker in the above command.

- Download experiment chart.

iter8 hub -e load-test-http

cd load-test-http

Load profile

Control the characteristics of the load generated by the load-test-http experiment by setting the number of queries (numQueries), duration (duration), the number of queries sent per second (qps), and the number of parallel connections used to send queries (connections).

iter8 run --set url=http://127.0.0.1/get \

--set numQueries=200 \

--set qps=10 \

--set connections=5

The duration value may be any Go duration string, for example 20s, 2m, or 1m30s.

iter8 run --set url=http://127.0.0.1/get \

--set duration=20s \

--set qps=10 \

--set connections=5

When you set numQueries and qps, the duration of the load test is determined automatically. Similarly, when you set duration and qps, the number of queries to be sent is determined automatically. If you set both numQueries and duration, duration is ignored.
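As a rough illustration of how these parameters relate, the shell sketch below does the arithmetic for the two examples above. It assumes load is sent at a steady rate, so that the number of queries is approximately qps multiplied by the duration in seconds. The function names are hypothetical and are not part of Iter8, and the values Iter8 actually derives may differ slightly.

```shell
# Hypothetical helpers (not part of Iter8) that approximate the derived values.
# Assumption: steady request rate, so queries ~= qps * seconds.

approx_duration_secs() {
  # $1: numQueries, $2: qps (integer division, so the result is rounded down)
  echo $(( $1 / $2 ))
}

approx_num_queries() {
  # $1: duration in seconds, $2: qps
  echo $(( $1 * $2 ))
}

approx_duration_secs 200 10   # numQueries=200, qps=10 -> prints 20
approx_num_queries 20 10      # duration=20s,   qps=10 -> prints 200
```

Under this assumption, the two iter8 run commands above describe roughly the same load: about 200 queries over about 20 seconds.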

Payload

Send any type of content as payload during the load test of HTTP POST endpoints, either by specifying the payload as a string (payloadStr), or by specifying a URL for Iter8 to fetch the payload from (payloadURL). You can also specify the HTTP Content Type header (contentType).

When payloadStr is set, content type is set to application/octet-stream by default.

iter8 run --set url=http://127.0.0.1/post \

--set payloadStr="abc123"

Set content type to text/plain.

iter8 run --set url=http://127.0.0.1/post \

--set payloadStr="abc123" \

--set contentType="text/plain"

Fetch JSON content from a URL. Use this JSON as payload. Set content type to application/json.

iter8 run --set url=http://127.0.0.1/post \

--set payloadURL=https://data.police.uk/api/crimes-street-dates \

--set contentType="application/json"

Fetch a JPEG image from a URL. Use this image as payload. Set content type to image/jpeg.

iter8 run --set url=http://127.0.0.1/post \

--set payloadURL=https://cdn.pixabay.com/photo/2021/09/08/17/58/poppy-6607526_1280.jpg \

--set contentType="image/jpeg"

Metrics and SLOs

By default, the following metrics are collected by load-test-http:

- request-count: total number of requests sent

- error-count: number of error responses

- error-rate: fraction of error responses

- latency-mean: mean of observed latency values

- latency-stddev: standard deviation of observed latency values

- latency-min: min of observed latency values

- latency-max: max of observed latency values

- latency-pX: Xth percentile of observed latency values, for X in [50.0, 75.0, 90.0, 95.0, 99.0, 99.9]

In addition, any other latency percentiles that are specified as part of SLOs are also collected.
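To make the definitions of the simpler metrics concrete, here is a minimal shell sketch that derives a few of them from a list of per-request latencies. It is not how Iter8 computes its metrics: the helper name and the sample values are made up for illustration, and percentiles and standard deviation are left out because this tutorial does not specify how they are calculated.

```shell
# Hypothetical helper (not part of Iter8): summarize per-request latencies.
# $1: space-separated latencies in msec (must be non-empty)
# $2: number of error responses among those requests
summarize() {
  # $1 is intentionally unquoted so that each latency lands on its own line.
  printf '%s\n' $1 | awk -v errors="$2" '
    {
      sum += $1
      if (NR == 1 || $1 < min) min = $1
      if (NR == 1 || $1 > max) max = $1
    }
    END {
      printf "request-count=%d error-count=%d error-rate=%.2f ", NR, errors, errors / NR
      printf "latency-mean=%.2f latency-min=%.2f latency-max=%.2f\n", sum / NR, min, max
    }'
}

# Made-up sample: four requests, one of which returned an error.
summarize "2 4 6 8" 1
# prints: request-count=4 error-count=1 error-rate=0.25 latency-mean=5.00 latency-min=2.00 latency-max=8.00
```

Note that error-rate is a fraction between 0 and 1, not a percentage, which is why an SLO of error-rate=0 means no error responses at all.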

Example

iter8 run --set url=http://127.0.0.1/get \

--set SLOs.error-rate=0 \

--set SLOs.latency-mean=50 \

--set SLOs.latency-p90=100 \

--set SLOs.latency-p'97\.5'=200

- In the above experiment, the following latency percentiles are collected and reported.

[50.0, 75.0, 90.0, 95.0, 97.5, 99.0, 99.9]

- The following SLOs are validated.

- error rate is 0

- mean latency is under 50 msec

- 90th percentile latency is under 100 msec

- 97.5th percentile latency is under 200 msec
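Each SLO in this example is an upper limit on a metric, and the text report shown later in this tutorial expresses each one as a `<=` condition. The sketch below mimics that check in plain shell. It is only an illustration under that assumption: the helper names are hypothetical, and the observed values are copied from the sample text report in this tutorial rather than produced by Iter8 here.

```shell
# Hypothetical helper (not part of Iter8): succeeds when value <= limit.
slo_satisfied() {
  # $1: observed metric value, $2: SLO upper limit
  awk -v value="$1" -v limit="$2" 'BEGIN { exit !(value <= limit) }'
}

check() {
  # $1: metric name, $2: observed value, $3: upper limit
  if slo_satisfied "$2" "$3"; then
    echo "$1 <= $3: true"
  else
    echo "$1 <= $3: false"
  fi
}

# Observed values copied from the sample text report in this tutorial.
check error-rate 0.00 0
check latency-mean 5.72 50
check latency-p90 7.88 100
check latency-p97.5 8.92 200
```

With these sample values, all four checks print true, matching the Satisfied column of the sample report.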

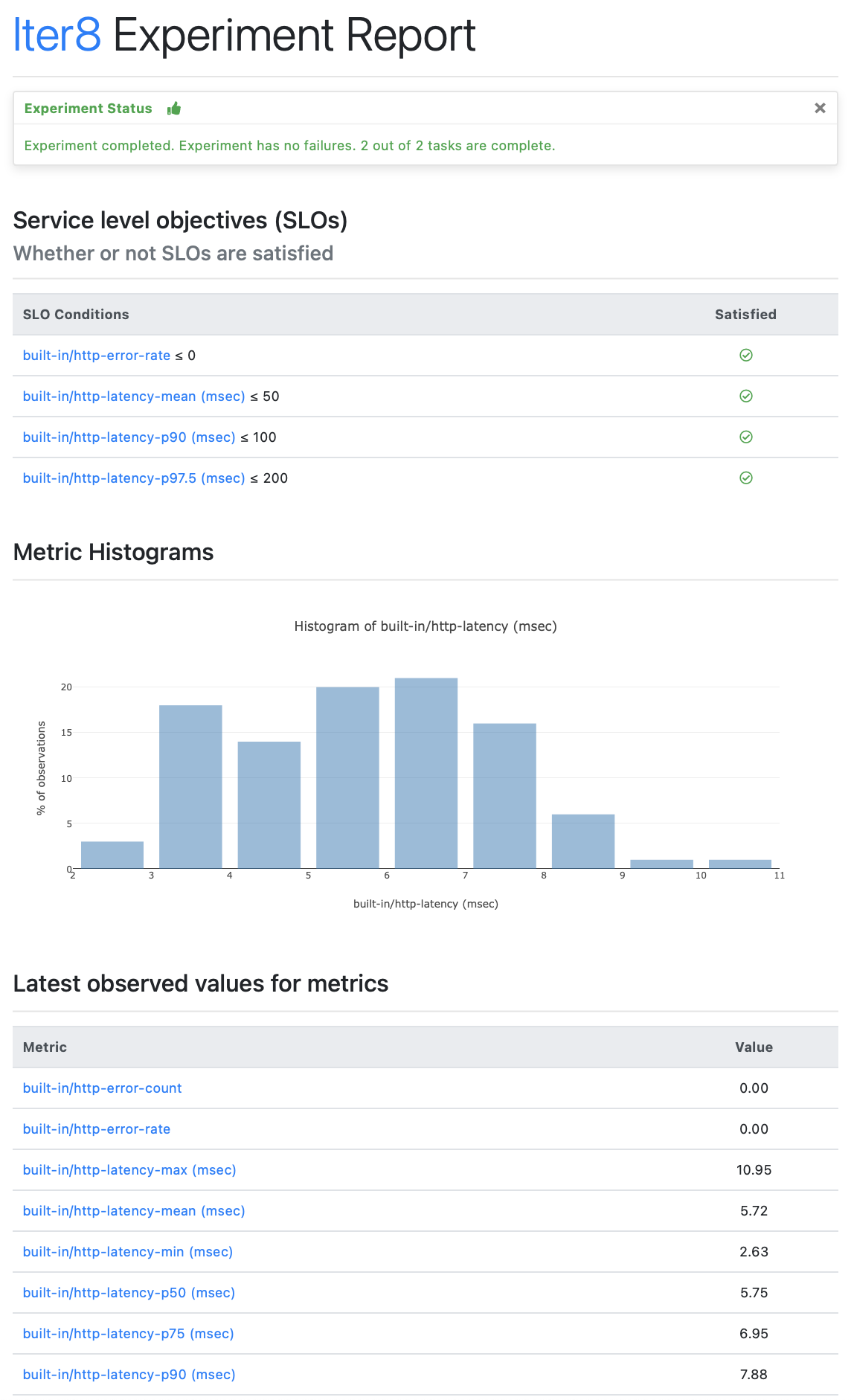

The Iter8 experiment report contains metric values, and SLO validation results.

iter8 report -o html > report.html

# open report.html with a browser. In MacOS, you can use the command:

# open report.html

The HTML report looks like this

iter8 report

The text report looks like this

Experiment summary:

*******************

Experiment completed: true

No task failures: true

Total number of tasks: 2

Number of completed tasks: 2

Whether or not service level objectives (SLOs) are satisfied:

*************************************************************

SLO Conditions |Satisfied

-------------- |---------

built-in/http-error-rate <= 0 |true

built-in/http-latency-mean (msec) <= 50 |true

built-in/http-latency-p90 (msec) <= 100 |true

built-in/http-latency-p97.5 (msec) <= 200 |true

Latest observed values for metrics:

***********************************

Metric |value

------- |-----

built-in/http-error-count |0.00

built-in/http-error-rate |0.00

built-in/http-latency-max (msec) |10.95

built-in/http-latency-mean (msec) |5.72

built-in/http-latency-min (msec) |2.63

built-in/http-latency-p50 (msec) |5.75

built-in/http-latency-p75 (msec) |6.95

built-in/http-latency-p90 (msec) |7.88

built-in/http-latency-p95 (msec) |8.50

built-in/http-latency-p97.5 (msec) |8.92

built-in/http-latency-p99 (msec) |10.00

built-in/http-latency-p99.9 (msec) |10.85

built-in/http-latency-stddev (msec) |1.70

built-in/http-request-count |100.00

Assertions

The iter8 assert subcommand checks whether the experiment result satisfies the specified conditions. If all assert conditions are satisfied, it exits with code 0; otherwise, it exits with code 1. Assertions are especially useful within CI/CD/GitOps pipelines.

Assert that the sample experiment completed without failures, and all SLOs are satisfied.

iter8 assert -c completed -c nofailure -c slos

Sample output from iter8 assert:

INFO[2021-11-10 09:33:12] experiment completed

INFO[2021-11-10 09:33:12] experiment has no failure

INFO[2021-11-10 09:33:12] SLOs are satisfied

INFO[2021-11-10 09:33:12] all conditions were satisfied
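Because iter8 assert reports its result through the exit code, a pipeline step can branch on it directly. The following is a minimal sketch of such a step. The function name and the messages are hypothetical, and what "proceeding" or "halting" means depends on your pipeline; only the iter8 assert invocation itself comes from this tutorial.

```shell
# Hypothetical pipeline step (function name and messages are illustrative).
# It relies only on the exit code of iter8 assert:
# 0 when all conditions are satisfied, non-zero otherwise.
gate_rollout() {
  if iter8 assert -c completed -c nofailure -c slos; then
    echo "SLOs satisfied: proceeding with rollout"
  else
    echo "assertion failed: halting rollout" >&2
    return 1
  fi
}
```

A pipeline script could call gate_rollout after the experiment has run. Since the function returns a non-zero status when the assertion fails, a CI system that stops on failing commands would stop at this step.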